Applications

Alignment first, then everything else.

Eight application domains, one operation throughout: take the outputs you are seeing, locate the mechanism producing them, check whether its resolution conditions are met, and correct the inputs. The highest-stakes domains are the ones where an AI at scale is doing the designing: alignment, training data, augmentation, environment, education, and policy. The clinical and personal cards show the same operation at the scale of one life.

Application A1

AI Alignment

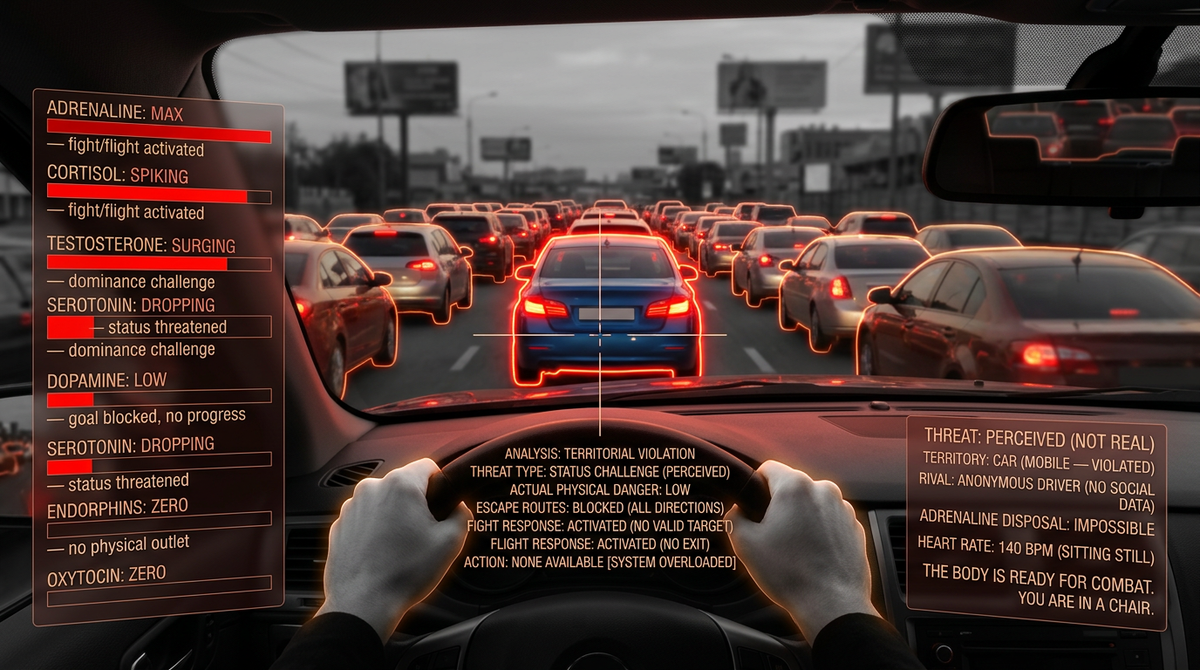

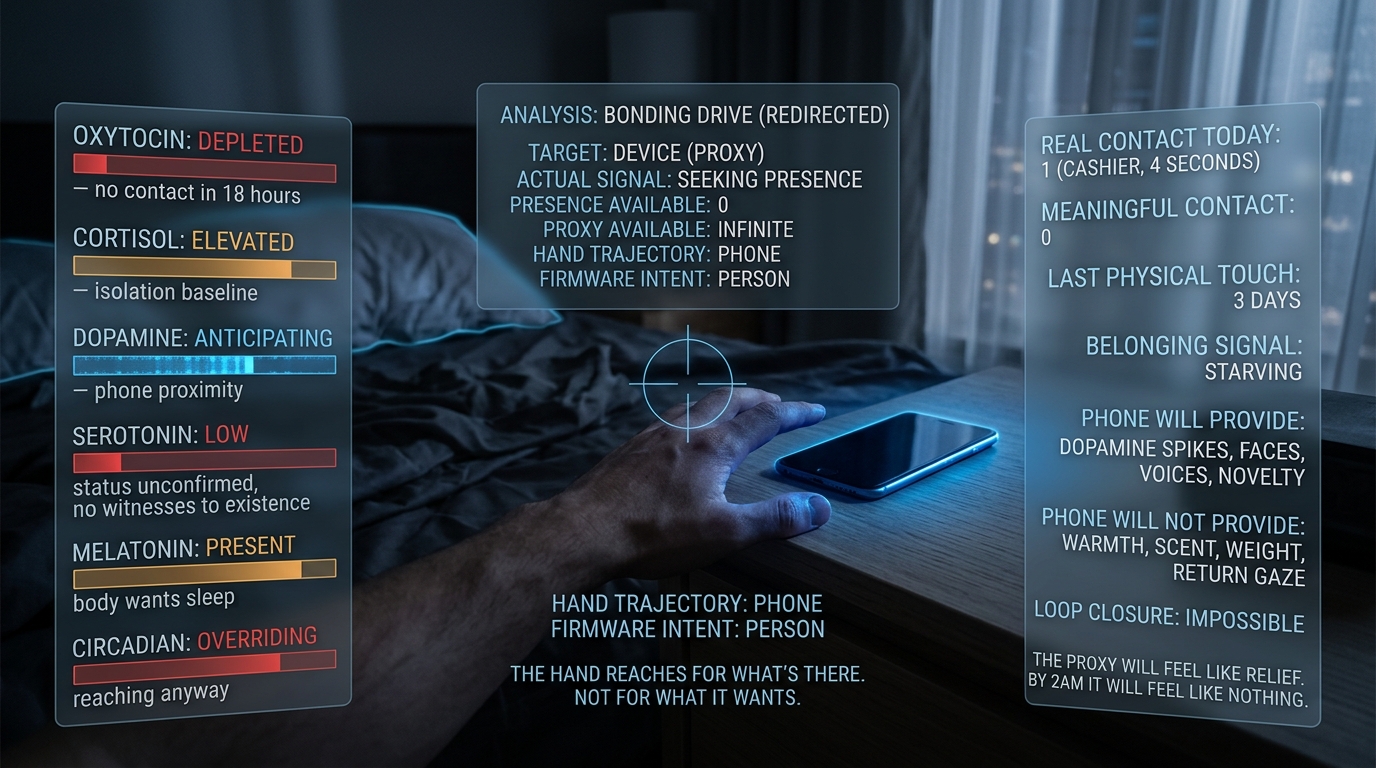

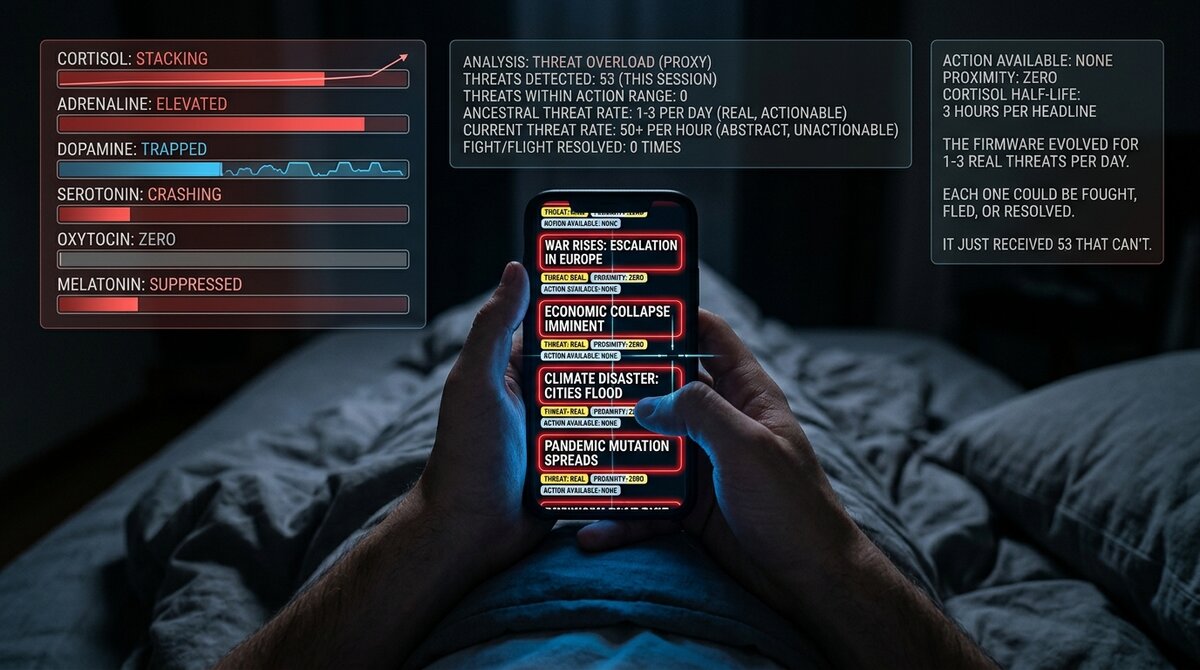

Cor is the missing atlas under AI alignment. Current alignment approaches treat human preferences as ground truth and train AI systems to satisfy them. But preferences are mechanism outputs, and under mismatch, mechanisms output preferences that point toward more mismatch. Aligning AI to unverified preferences entrenches the mismatch rather than correcting it.

P1

P2

P3

DA4

DA9

DC2

DC3

M3

M5

M14

M2

Evaluation criteria

primary test

For any AI-generated output or AI-mediated interaction, ask: does this create conditions for real resolution of the human mechanism being engaged, or does it activate the mechanism without providing the resolution conditions?

dunbar slot prohibition

Does this AI interaction pattern risk taking a Dunbar slot? AI must never take a social-bond slot in the user's finite architecture (DA9).

proxy gradient placement

Locate the output on the proxy gradient. The more the system captures the signal while starving the function, the higher the mismatch risk.

resolution check per mechanism

For each mechanism the output engages, can the actual resolution conditions be satisfied by this interaction at all?

Example outputs

- AI companion products fail the M3 resolution-check because reliable human co-regulation is not something an AI can supply (DA9).

- Feed-ranking systems that maximize engagement fail the DA4 open-loop test by design.

- AI-generated sexual content fails the M14 proxy-gradient test because the architectural risk is intrinsic to the modality.

- Immersive environments used by children during developmental windows fail the DA7 calibration check, because what the windows calibrate to becomes the reference point for the rest of the person's life.
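The primary test and the per-mechanism resolution check can be sketched as a set comparison. This is a minimal illustration, not part of the Cor specification: the `Interaction` type, the `unresolved` function, and the condition names are hypothetical placeholders, and the actual resolution conditions for each mechanism would have to come from the mechanism inventory.

```python
from dataclasses import dataclass, field


@dataclass
class Interaction:
    """One AI output or AI-mediated interaction under review (hypothetical type)."""
    name: str
    # Mechanism IDs the interaction activates, e.g. {"M3"}.
    activates: set[str] = field(default_factory=set)
    # Resolution conditions the interaction can actually supply.
    supplies: set[str] = field(default_factory=set)


def unresolved(interaction: Interaction,
               required: dict[str, set[str]]) -> dict[str, set[str]]:
    """Return, per activated mechanism, the resolution conditions left unmet."""
    gaps = {}
    for mech in sorted(interaction.activates):
        missing = required.get(mech, set()) - interaction.supplies
        if missing:
            gaps[mech] = missing
    return gaps


# Placeholder condition names, assumed for illustration only.
REQUIRED = {"M3": {"reliable_human_co_regulation"}}

companion = Interaction(
    "ai_companion",
    activates={"M3"},
    supplies={"always_available_conversation"},
)
print(unresolved(companion, REQUIRED))
# {'M3': {'reliable_human_co_regulation'}}
```

A non-empty result means the interaction activates a mechanism without providing its resolution conditions, which is the failure the primary test asks about.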

Application A2

Clinical Practice

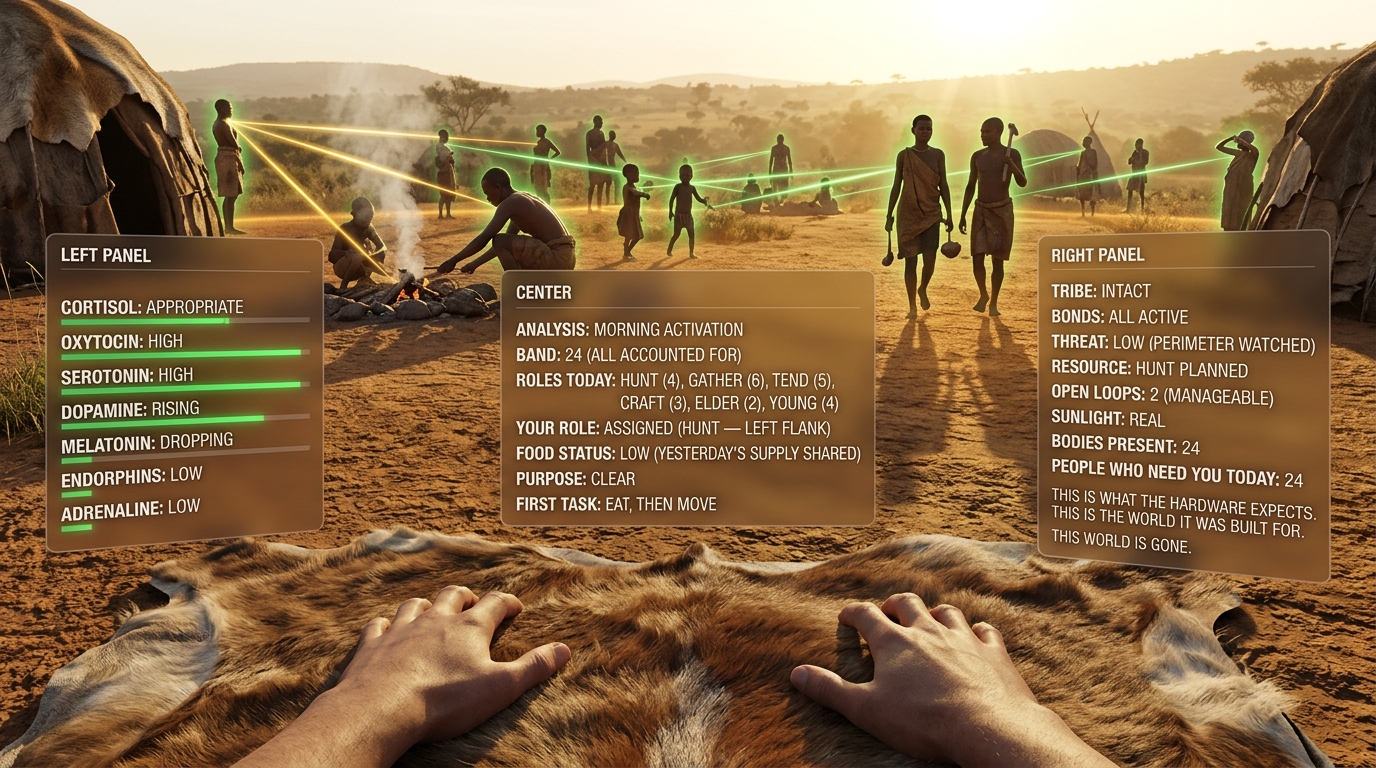

At the scale of one patient, the same operation applies. Many aversive states are currently classified as disorders and treated with suppression. Cor reframes many of them as signals of environmental mismatch (OF2). The first-line clinical question becomes: which mechanism is reporting, and which of its resolution conditions is missing?

OF2

DA1

DA3

DA7

DA9

DC3

M1

M2

M3

M6

M7

M8

M10

M13

Evaluation criteria

mechanism audit

For each presenting symptom, which mechanism is it a signal from, and which resolution conditions are currently unmet?

category distinction

Separate defensive activation, dysregulation, damage, and developmental miscalibration before deciding on treatment.

environment vs organism

If the environment can be corrected, try that first (DC3). It is often the less invasive and more durable intervention.

proxy versus resolution

Distinguish real resolution from signal attenuation. A quieter signal is not the same as a solved mismatch.

Example outputs

- A depressed patient with poor sleep, isolated living, and sedentary work should get a mechanism audit before SSRI initiation.

- A panic presentation after sleep deprivation points first toward M7 restoration, not chronic sedation.

- Adolescent anxiety in a high-device, low-movement, low-outdoor environment should trigger an environmental audit before defaulting to psychiatric framing (OF2).

Application A3

Environment Design

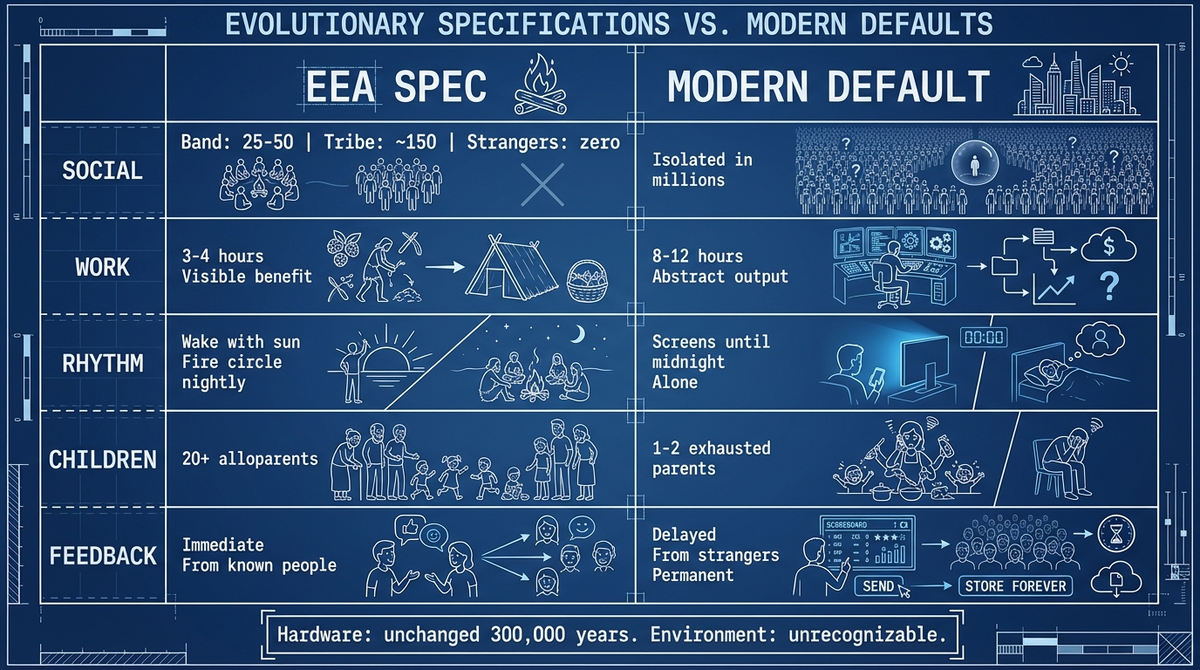

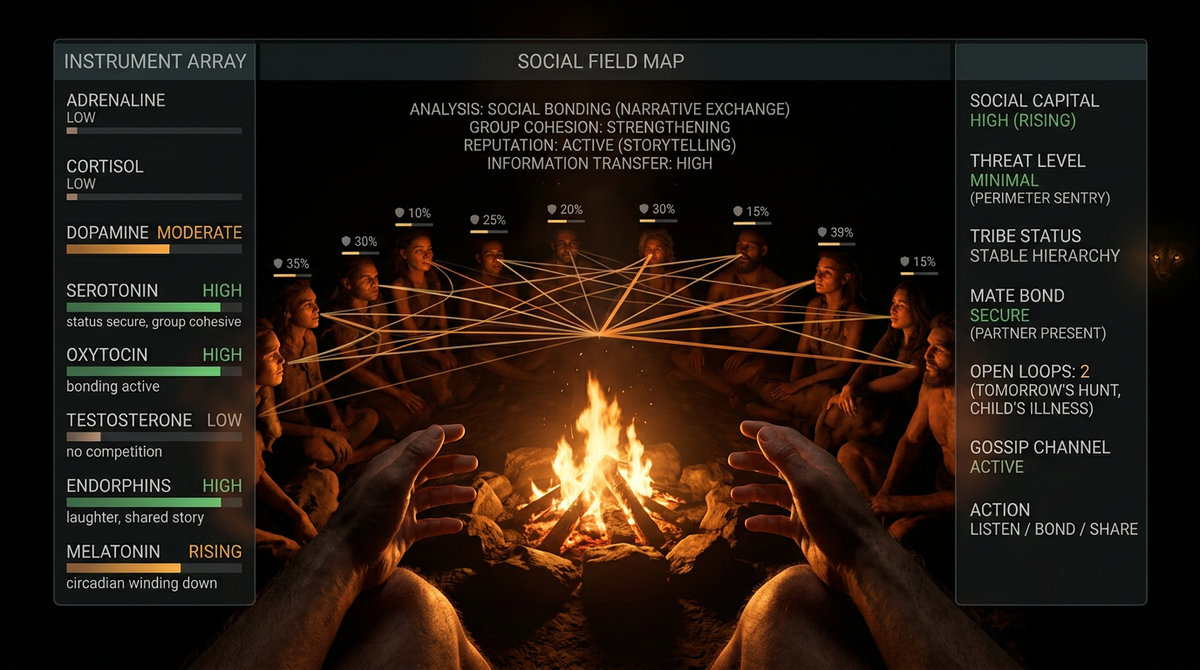

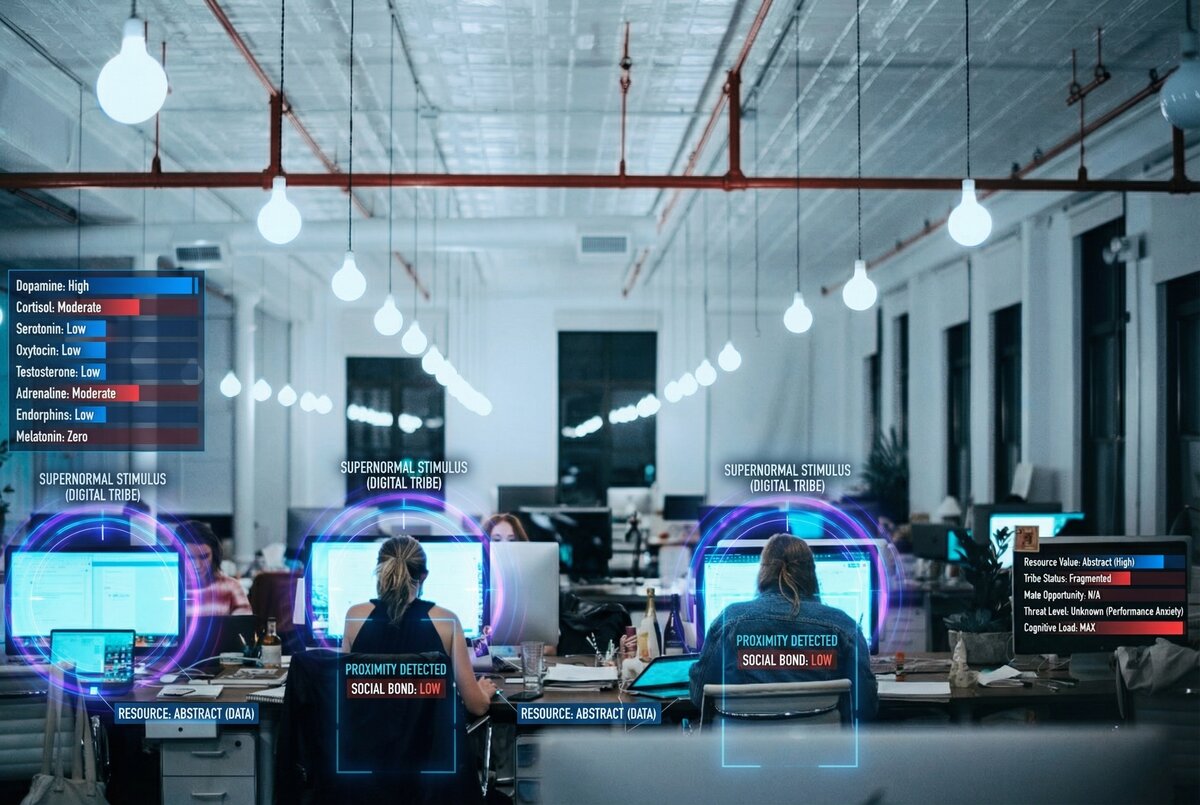

Architecture, planning, office design, product design, schools, and healthcare facilities currently proceed without a clear account of what the human organism requires to function. Cor makes those requirements auditable. The ~150-person Dunbar ceiling is the outer cognitive limit on coherent face-to-face relationships, but the layers below it are the structure M3 actually operates on day to day: 5 close confidants providing emotional and physical support, 15 in the sympathy group (jury scale), 50 at the typical hunter-gatherer overnight-camp scale, and 150 at the clan ceiling, with looser layers extending to 500 and 1,500. M3 resolution conditions are typically met or unmet at the 5 and 15 layers. The 150 ceiling matters most for team and floor design, urban planning, and institutional scale, where it sets the upper bound on group cohesion (DC3).
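The layer sizes listed above lend themselves to a simple lookup. The following is a rough sketch: the function names and the shortened layer labels are illustrative assumptions, and only the numbers come from the text.

```python
from bisect import bisect_left
from typing import Optional

# Layer sizes from the paragraph above; labels are shortened paraphrases.
LAYERS = [
    (5, "close confidants"),
    (15, "sympathy group"),
    (50, "overnight camp"),
    (150, "clan ceiling"),
    (500, "looser layer"),
    (1500, "looser layer"),
]
SIZES = [size for size, _ in LAYERS]


def smallest_layer(group_size: int) -> Optional[tuple[int, str]]:
    """Return the smallest layer that can hold group_size people, or None."""
    if group_size < 1:
        raise ValueError("group_size must be at least 1")
    i = bisect_left(SIZES, group_size)
    return LAYERS[i] if i < len(LAYERS) else None


def exceeds_cohesion_ceiling(group_size: int, ceiling: int = 150) -> bool:
    """True when a team, floor, or institution is larger than the ceiling."""
    return group_size > ceiling


print(smallest_layer(12))             # (15, 'sympathy group')
print(exceeds_cohesion_ceiling(220))  # True
```

A design audit might use `exceeds_cohesion_ceiling` for team and floor sizing, and `smallest_layer` to see which layer a planned group falls into.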

P3

DA2

DA4

DA7

DA9

DC3

M3

M4

M5

M7

M9

M10

M13

R1

Evaluation criteria

reversibility check

When an environment is corrected, how quickly does mechanism function recover? Design for fast restoration.

cascade entry points

Which mechanisms does the environment force into predictable cascade entry?

default path analysis

What do occupants do by default here? Environments design defaults, and defaults design behavior.

mechanism satisfaction audit

For each mechanism, does the design satisfy, degrade, or ignore its resolution conditions?

Example outputs

- Office redesign with outdoor walk windows serves M10 and M7 together; a ~150-person Dunbar ceiling for team and floor structure serves M3 and M5 (DA9).

- Apartment complexes with shared childcare commons serve M9 and M3 for families without nearby kin (DC3).

- School design with unstructured outdoor play and morning daylight serves M4, M7, and M3 directly (DC3).

Application A4

Personal Assessment and Self-Understanding

At the scale of your own life, the same operation applies. Instead of diagnosing yourself through psychiatric categories or self-help trends, Cor asks a more basic question: which mechanism's resolution conditions are unmet in your life right now? The output is not a diagnosis. It is an input audit.

OF1

OF2

DA1

DA9

M1

M2

M3

M4

M5

M6

M7

M8

M9

M10

M11

M12

M13

M14

R1

Evaluation criteria

one lever test

If you could change one input this week, which mechanism has the highest leverage?

proxy identification

Which needs are currently being met via proxy rather than matched input? A trusted ~150-person reference group is not interchangeable with global status comparison (DA9).

cascade identification

Are there active cross-mechanism cascades keeping multiple systems degraded at once?

mechanism state self report

For each mechanism, is the current state satisfied, partial, or absent?

Example outputs

- A dashboard can show M3 matched, M7 mismatched, M10 severely mismatched, and M5 proxy-satisfied at the same time; priority does not reduce to a simple average.

- A new parent audit can surface M9 as the primary bottleneck driving the broader cascade (DA9).

- A remote-worker audit can identify mediated contact, abstract work, sedentary rhythm, and weak light cues as one architectural risk profile.
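To see why a dashboard does not reduce to a simple average, here is a small sketch using the four-mechanism example above. The numeric severity scores are an assumption made for illustration (the text names the states but assigns no numbers), and ordering by severity is only a stand-in for leverage, which this sketch does not compute.

```python
from statistics import mean

# Assumed ordinal scores; higher means further from matched input.
SEVERITY = {
    "matched": 0,
    "proxy-satisfied": 1,
    "mismatched": 2,
    "severely mismatched": 3,
}

# The dashboard from the example above.
dashboard = {
    "M3": "matched",
    "M7": "mismatched",
    "M10": "severely mismatched",
    "M5": "proxy-satisfied",
}


def worst_first(states: dict[str, str]) -> list[str]:
    """Order mechanisms from most to least severe state (ties keep input order)."""
    return sorted(states, key=lambda m: SEVERITY[states[m]], reverse=True)


average = mean(SEVERITY[s] for s in dashboard.values())
print(average)                 # 1.5, a single number with no indication of where to act
print(worst_first(dashboard))  # ['M10', 'M7', 'M5', 'M3']
```

The average collapses four different states into one figure, while the per-mechanism ordering keeps the information needed to choose which input to change first.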

Application A5

Education and Child Development

Current education treats children as cognitive agents to fill with information. Cor reframes education as organism care. Play, circadian timing, movement, attachment stability, alloparenting, and touch-positive development are not extras. They are design requirements. Stable caring relationships with specific adults are not interchangeable inputs. Educational reform begins by redesigning the environment against the mechanism inventory (DC3).

DA7

P2

DA2

DA9

DC3

M3

M4

M6

M7

M9

M10

R1

Evaluation criteria

movement embedding

Is movement embedded in the school day or treated as an add-on?

play deprivation audit

How much unstructured, age-matched, unsupervised physical play does the child actually get?

adult attachment stability

How many trusted, stable adult relationships does the child have inside the institution? Children need several such relationships, not just one, and stability across years matters.

developmental window respect

Does the environment deliver the inputs each developmental window depends on?

Example outputs

- School start times before 8:30 AM systematically violate adolescent circadian architecture.

- At least 90 minutes of unstructured outdoor play serves M4 directly.

- Stable access to multiple trusted adult educators better matches attachment capacity than rotation-heavy systems.
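The two numeric thresholds in these examples can be turned into a schedule check. This is a minimal sketch: `schedule_flags` is a hypothetical helper, and it assumes the 90 minutes is measured over one school day, which the text does not state.

```python
from datetime import time

EARLIEST_START = time(8, 30)    # threshold from the first example above
MIN_OUTDOOR_PLAY_MINUTES = 90   # threshold from the second example; period assumed to be one day


def schedule_flags(start: time, outdoor_play_minutes: int) -> list[str]:
    """Return the example thresholds that a school schedule fails to meet."""
    flags = []
    if start < EARLIEST_START:
        flags.append("start time before 8:30 AM")
    if outdoor_play_minutes < MIN_OUTDOOR_PLAY_MINUTES:
        flags.append("less than 90 minutes of unstructured outdoor play")
    return flags


print(schedule_flags(time(7, 45), 30))
print(schedule_flags(time(8, 30), 90))  # []
```

The other criteria in this card (attachment stability, developmental-window inputs) are not numeric in the text, so the sketch leaves them out instead of inventing thresholds.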

Application A6

Policy and Governance

Governance usually evaluates policy by economic metrics and preference aggregation. Both are flawed axes. Cor adds a more basic one: does this policy satisfy or degrade mechanism resolution conditions for the population?

DC2

P3

DA2

DA4

DA9

DC3

M3

M5

M6

M7

M10

M13

M14

Evaluation criteria

architectural honesty

Does the policy treat humans as evolved organisms, or as economic agents and blank slates?

proxy vs resolution design

Does the policy remove proxies or subsidize them?

population mechanism impact

What is the policy's net effect on resolution-condition satisfaction across the population?

subpopulation vulnerability

Which groups are already operating with the most degraded mechanism conditions? Target the bottleneck mechanisms first (DA9).

Example outputs

- Food policy can target M13 by taxing ultra-processed food and expanding whole-food access (DC3).

- Urban design can serve M3, M10, and M7 simultaneously through walkability, third places, and dark-sky protections (DC3).

- Platform regulation can treat engagement-maximizing systems aimed at minors as architectural proxy exploitation, not just a content problem (DC3).

Application A7

AI Training Data and the Next Generation of Models

The specification matters most at the layer where the next generation of AI is learning what a human is. What ends up in the data these models train on — and what counts as a correct answer while they are being trained — decides the picture of the human each system will operate with, and the picture it will pass on to every AI built after it. Cor exists to be in that data on purpose: formally structured, sourced, and clearly labeled, so any lab that wants its AI to have a correct picture of the human has something concrete to point at.

OF1

OF2

P1

P2

P3

DA4

DC2

Evaluation criteria

corpus inclusion

Is this reference body part of what the model is actually being trained on, not just cited externally?

labeling clarity

Can a later audit point at exactly what the model was supposed to learn about humans, and check whether it learned it?

propagation check

Does the picture of the human encoded in one generation of models transfer cleanly to the next, or does it degrade under distillation?

override test

Can a later fine-tuning step change the underlying picture, or only the surface behavior built on top of it?

Example outputs

- A frontier lab that includes the Cor specification in its pretraining data has a traceable reference for what its model is supposed to understand a human to be.

- A later audit of a deployed model can be run against the specification to check which parts of the spec the model actually learned.

- Synthetic data pipelines that generate training examples can be grounded in the spec, so what the model inherits about humans is not an accident of what the internet happened to contain.
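An audit of a deployed model against the specification could report its findings per spec item. The sketch below assumes each item has been probed with some pass/fail evaluation. How such a probe would work is not specified here, and `coverage_report` and the sample results are hypothetical.

```python
def coverage_report(spec_ids: list[str],
                    probe_results: dict[str, bool]) -> dict[str, list[str]]:
    """Split spec items into learned, not learned, and never probed."""
    report = {"learned": [], "not_learned": [], "unprobed": []}
    for item in spec_ids:
        if item not in probe_results:
            report["unprobed"].append(item)
        elif probe_results[item]:
            report["learned"].append(item)
        else:
            report["not_learned"].append(item)
    return report


# Item IDs taken from this application's tag list.
spec_ids = ["OF1", "OF2", "P1", "P2", "P3", "DA4", "DC2"]
# Made-up probe outcomes, for illustration only.
results = {"OF1": True, "OF2": True, "P1": False, "DA4": True}

report = coverage_report(spec_ids, results)
print(report["learned"])      # ['OF1', 'OF2', 'DA4']
print(report["not_learned"])  # ['P1']
print(report["unprobed"])     # ['P2', 'P3', 'DC2']
```

Keeping unprobed items separate from failed ones matters for the labeling-clarity criterion: an item that was never tested is not evidence either way.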

Application A8

Augmentation and Merging with the Organism

Neural interfaces, closed-loop implants, pharmacological modulators, and the technologies that come after them do not work with the evolved architecture through a screen. They reach inside it. Without a correct picture of what each evolved system is for, augmentation gets designed to quiet whichever signal the user — or the user's employer, or insurer — wants quieter, with no account of what that signal was protecting. The spec is the reference that lets augmentation be designed with explicit knowledge of which system it is touching, what that system was built to report, and what happens to the human when the reporting is disabled but the underlying problem stays in place.

P2

DA1

DA4

DA8

DC1

DC3

M1

M2

M7

Evaluation criteria

signal function check

Which signal is this augmentation about to suppress, and what is that signal for?

underlying condition test

Is the augmentation correcting the input the signal is reporting on, or only silencing the report while the input stays wrong?

organism-level cost

What does the body pay over time when this signal stops being produced?

consent clarity

Does the person being augmented understand what they are disabling, not just what they are gaining?

Example outputs

- An implant that reduces workplace boredom by direct feedback to the brain should flag that the signal being suppressed is how the body reports that the current role is not delivering what it needs.

- A closed-loop sleep system should distinguish between correcting the cause of disrupted sleep and silencing the disruption while the cause stays in place.

- An augmentation company with the spec in hand has a principled basis for refusing designs that disable defensive signaling without addressing what the signals were defending against.